-

The Internet is About to Become WAY Faster

Earlier this week, the big news in the tech space was the completion of the HTTP/2 spec. Gibberish, gibberish, gibberish. What does this mean for the internet? The short version: it’s about to become way faster. Faster is extremely important if you want to make money from traffic to your site. Or, you know, if…

-

Platforms vs. Products: Google Does Some Soul Searching

A rant from a Googler comparing Amazon’s focus on a platform vs Google’s focus on products. Fundamental.

-

Rules for Entrepreneurs: Compete and Collaborate

Google and Apple are not only competitors… they are collaborators. Indeed, Apple and Google both offer top-level smartphones: the iPhone from Apple and the assortment of Android devices by Google (Google not only has its own phones but is the main proprietor of the Android open source…

-

Make the Web, Cloud Do Your Work So You Don’t Have To

While browsing the web yesterday (after sifting through my email post-vacation), I came across this Ars Technica article discussing the new Firefox upgrade timeline. It actually follows a similar upgrade timeline to the one WordPress adopted after WordPress 2.0 was released. The new policy outlines a 3-4 month window for new major releases with…

-

I’m Pro Choice. I’m Android.

We in the tech world are a fickle bunch. On one side of our brain, we scream about openness and freedoms. We verbally disparage anyone who would dare mess with our precious Internet freedoms. Many of us, especially in my WordPress community, swear allegiance to licensing that ensures data and code exchanges on open standards.

-

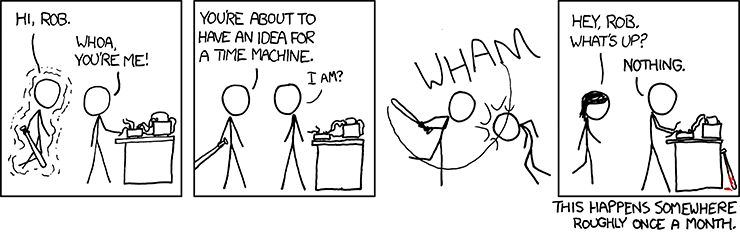

Doers & Talkers: Cultivating Innovation

A few years ago, I wrote a post called Doers and Talkers where I profiled two types of people in the technology space: those who have ideas and are visionaries (the talkers) and those who implement those ideas on behalf of others (the doers). I looked back at that post and realized that, while correct,…

-

Allow me to Complain…

Festivus, the traditional day for people to “air their grievances,” was the other day. I didn’t do that then, but since I seem to be a bit fired up today, I’m going to depart from the informative, intellectual articles that normally go up here and instead rant a bit. Because there are a lot…

-

Google Chrome OS: A Lot to Do About Nothing

Google is known for doing many things right. Though I’ve given them a hard time over the years, it’s undeniable that my life still revolves, in a very real way, around Google products. I use Gmail not only for, er, Gmail, but I also use Google Apps to run all my email services, including my public aaron@technosailor.com…

-

How Location Based Services Saved My Life

Sitting here in the Automattic offices in San Francisco, I find myself lovingly caressing my BlackBerry, which, for a short time yesterday, I believed was separated from me for good. Turns out I lost it the night before and was having phantom spasms over not having it in my pocket to check email, Twitter or do…

-

Changing the Currency of Influence via Search

There is no doubt that Google is the king of search, but how did they become that way? In the old days (you know, before PageRank was dubbed irrelevant), the idea was that the number of links to a site, particularly from more “powerful” sites, increased the relevance of an indexed page in the Google…